How to build AI Chatbots for Travel Booking that actually convert

AI chatbots for travel booking handle up to 80% of inquiries, cut first response time to about 11 seconds, and boost conversions. Learn how to build one that actually books trips.

Approximately 40% of travelers worldwide are already using AI-based tools for trip planning and booking in 2025 (Statista, 2025). A separate source reports that usage more than doubled in under a year, from 11% in October 2024 to 24% by mid-2025 (Global Rescue, 2025). The AI chatbot for travel booking is at the center of this shift. But there is a wide gap between the chatbots that actually generate bookings and the ones that frustrate travelers into calling a phone number. The difference is not about how clever the responses sound. It is about whether the chatbot can access real inventory, process real transactions, and maintain context across a multi-step booking conversation. This guide covers how to build the kind that converts.

Key takeaways

- Travel chatbots handle up to 80% of customer inquiries at leading agencies, and 83% of travelers are more likely to book when AI-enhanced services are offered (WifiTalents, 2025).

- The AI chatbot market is projected to reach $2.8 billion in 2025, growing at 28.5% CAGR through 2030 (Juniper Research, 2024).

- A production-grade AI chatbot for travel booking requires four components: an LLM reasoning layer, tool-use APIs connected to live inventory, a conversation memory store, and a human escalation pipeline.

- Chatbots that access real inventory and complete bookings within the conversation convert at 3x the rate of chatbots that redirect users to a separate booking page.

- Adamo Software deployed an AI-powered travel assistant integrated into a CMS platform that reduced support team workload on common tour-related questions and drove higher booking conversions through personalized recommendations.

Three generations of travel chatbots

Not all travel chatbots are the same. Understanding the three generations helps clarify what to build and what to avoid.

Generation 1: Rule-based chatbots. These follow scripted decision trees. The traveler selects from predefined options (“Check flight status,” “Cancel booking,” “Talk to agent”), and the bot routes them through a fixed flow. They work for simple FAQ deflection but cannot handle open-ended queries. If a traveler asks “I need a beachfront hotel in Phuket for 4 nights under $100 with a pool,” a rule-based bot cannot help. Most travel chatbots deployed before 2023 fall into this category.

Generation 2: Generative AI chatbots. These use large language models (GPT-4, Claude, Gemini) to understand natural language and generate human-like responses. They can interpret complex queries, maintain conversational context, and provide detailed answers. The limitation: most generative chatbots are information-only. They can recommend destinations, explain cancellation policies, and draft itineraries, but they cannot check live availability or complete a booking. They are knowledgeable assistants without hands.

Generation 3: Agentic travel chatbots. These combine LLM reasoning with tool-use capabilities. The chatbot does not just understand the traveler’s request. It calls APIs to search real inventory, checks availability in real time, creates bookings, processes payments, and sends confirmations, all within the conversation. Expedia Group’s AI service agent handles over 143 million conversations annually, and more than 50% of travelers self-serve their requests without needing to call in (Expedia Group, 2025). This is the generation that drives conversion.

The ROI difference between generations is significant. According to industry data, chatbots in travel agencies handle up to 80% of customer inquiries, and 83% of travelers are more likely to book when AI-enhanced services are offered (WifiTalents, 2025). Modern AI chatbots deliver first responses in an average of 11 seconds, compared to 4+ hours for email support (Hyperleap AI, 2025). The business case is clear: build Generation 3, or accept that the chatbot is a cost center, not a revenue driver.

Architecture of a production-grade AI chatbot for travel booking

A travel chatbot that books trips requires four interconnected components. Removing any one of them reduces the chatbot from a booking agent to a conversation widget.

Component 1: LLM reasoning layer

The LLM interprets the traveler’s intent, determines which actions to take, and generates natural responses. For travel use cases, the LLM must handle several specific challenges:

- Multi-intent queries: “Find me a flight from Hanoi to Tokyo next Friday, and a hotel near Shibuya for 3 nights, preferably with a gym” contains at least three distinct intents (flight search, hotel search, amenity filter).

- Ambiguity resolution: “Somewhere warm in July for under $2000” requires the LLM to infer destination options, not just parrot the query.

- Context maintenance across turns: the traveler may refine their request over 5 to 10 messages. The LLM must remember that “make it a window seat” refers to the flight discussed three messages ago.

The recommended approach: use a foundation model (GPT-4, Claude, or Gemini) via API, wrapped in an orchestration layer that manages tool routing. Do not fine-tune the model on travel data unless you have a very specific domain need. Prompt engineering with well-structured system prompts and tool definitions is more cost-effective and maintainable.
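The orchestration layer can be pictured as a thin router between the model and the tools. The following is a minimal sketch, assuming a hypothetical `fake_llm` function that stands in for a real foundation-model API call and returns the tool calls the model would request. The tool names, arguments, and return values are illustrative only.

```python
from typing import Any, Callable

# Hypothetical tools; a real system would call supplier or GDS APIs here.
def search_flights(origin: str, destination: str, date: str) -> list[dict[str, Any]]:
    return [{"flight": "XX123", "origin": origin, "destination": destination, "date": date}]

def search_hotels(city: str, nights: int) -> list[dict[str, Any]]:
    return [{"hotel": "Example Hotel", "city": city, "nights": nights}]

# Registry that maps tool names (as the model sees them) to Python callables.
TOOLS: dict[str, Callable[..., Any]] = {
    "search_flights": search_flights,
    "search_hotels": search_hotels,
}

def fake_llm(message: str) -> list[dict[str, Any]]:
    """Stand-in for the model: returns the tool calls it 'decided' to make."""
    text = message.lower()
    calls: list[dict[str, Any]] = []
    if "flight" in text:
        calls.append({"tool": "search_flights",
                      "args": {"origin": "HAN", "destination": "TYO", "date": "2025-07-11"}})
    if "hotel" in text:
        calls.append({"tool": "search_hotels", "args": {"city": "Tokyo", "nights": 3}})
    return calls

def route(message: str) -> dict[str, Any]:
    """Execute every tool call requested for one traveler message."""
    results: dict[str, Any] = {}
    for call in fake_llm(message):
        tool = TOOLS.get(call["tool"])
        if tool is None:
            # Never execute a tool the registry does not know about.
            results[call["tool"]] = {"error": "unknown tool"}
            continue
        results[call["tool"]] = tool(**call["args"])
    return results
```

In a real deployment the results would be passed back to the model so it can phrase the reply. Keeping the registry outside the prompt means a new tool can be added without touching the model.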

Component 2: Tool-use APIs for live inventory

This is what separates a chatbot that converts from one that merely converses. The LLM must be able to call real systems:

- Search flights via GDS APIs (Amadeus, Sabre) or airline NDC connections

- Search hotels via supplier APIs, OTA aggregators, or the property’s own booking engine

- Check real-time availability with up-to-date pricing

- Create bookings with traveler details, payment processing, and confirmation generation

- Modify reservations (date changes, room upgrades, cancellations)

- Send notifications (email confirmations, SMS updates, push notifications)

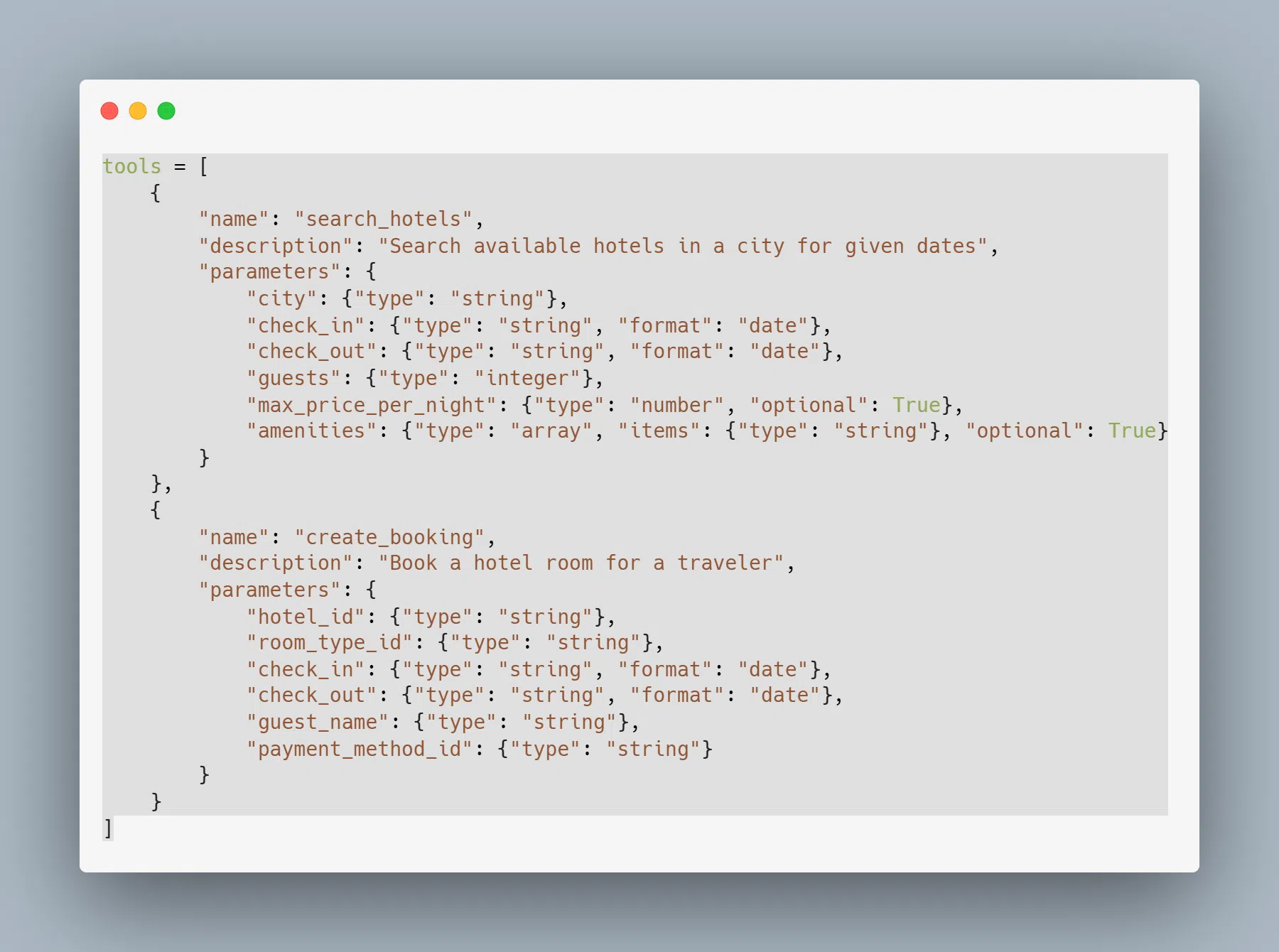

Each tool is defined with a structured schema (name, description, typed parameters) that the LLM can interpret and invoke.
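As an illustration, a tool definition might look like the sketch below. It uses a JSON Schema style layout of the kind commonly accepted by LLM function-calling APIs. The tool name, field names, and the `missing_arguments` helper are assumptions made for this example, not a specific vendor's format.

```python
# Hypothetical tool definition in a JSON Schema style layout.
SEARCH_HOTELS_TOOL = {
    "name": "search_hotels",
    "description": "Search live hotel inventory for a city and date range.",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string"},
            "check_in": {"type": "string", "description": "ISO date, e.g. 2025-07-12"},
            "check_out": {"type": "string", "description": "ISO date, e.g. 2025-07-15"},
            "max_price_per_night": {"type": "number"},
        },
        "required": ["city", "check_in", "check_out"],
    },
}

def missing_arguments(tool: dict, args: dict) -> list[str]:
    """Return required parameters the model did not supply, in schema order."""
    return [name for name in tool["parameters"]["required"] if name not in args]
```

Checking required arguments before calling the supplier API lets the chatbot ask a follow-up question ("Which dates?") instead of sending an incomplete search.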

The integration with GDS providers and booking engines is the most technically demanding part. GDS APIs enforce rate limits, return data in inconsistent formats, and require careful caching strategies. For a detailed breakdown of GDS integration patterns, including authentication, parallel search, and data normalization, see our AI-powered travel booking platform guide.

Component 3: Conversation memory

A travel booking conversation typically spans 5 to 15 messages. The chatbot must maintain a structured memory of:

- The traveler’s stated preferences (destination, dates, budget, group size)

- Search results already presented (so it does not repeat itself)

- Decisions made (the traveler chose Hotel A over Hotel B)

- Booking state (pending, confirmed, modified)

- Traveler profile data (loyalty tier, past bookings, saved payment methods)

Short-term memory (within a session) is handled by the LLM’s context window. Long-term memory (across sessions) requires a persistent store, typically a combination of Redis for session state and PostgreSQL for traveler profiles. The memory layer is what enables interactions like: “Book the same hotel I stayed at in Bangkok last March, but for 5 nights this time.”
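A rough sketch of the two memory tiers follows. Plain dictionaries stand in for Redis (session state) and PostgreSQL (traveler profiles), and every class, field, and function name is an assumption chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class TravelerProfile:
    """Long-term data; would live in a persistent store such as PostgreSQL."""
    traveler_id: str
    loyalty_tier: str = "none"
    past_bookings: list[dict] = field(default_factory=list)

@dataclass
class SessionMemory:
    """Per-conversation data; would live in a session store such as Redis."""
    preferences: dict = field(default_factory=dict)
    presented_results: list[dict] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    booking_state: str = "pending"

# In-memory stand-ins for the two stores.
PROFILES: dict[str, TravelerProfile] = {}
SESSIONS: dict[str, SessionMemory] = {}

def find_past_booking(traveler_id: str, city: str) -> Optional[dict]:
    """Return the most recently recorded booking in a city, if any."""
    profile = PROFILES.get(traveler_id)
    if profile is None:
        return None
    matches = [b for b in profile.past_bookings if b["city"] == city]
    return matches[-1] if matches else None

def start_rebooking(session_id: str, traveler_id: str, city: str, nights: int) -> SessionMemory:
    """Seed a session with the hotel from a past stay and a new length of stay."""
    session = SESSIONS.setdefault(session_id, SessionMemory())
    previous = find_past_booking(traveler_id, city)
    if previous is not None:
        session.preferences = {"hotel": previous["hotel"], "city": city, "nights": nights}
    return session
```

With a profile on file, a request like "the same hotel as last time, but for 5 nights" reduces to `start_rebooking(session_id, traveler_id, "Bangkok", 5)` followed by a live availability check.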

Component 4: Human escalation pipeline

Even the best AI chatbot for travel booking cannot handle every scenario. Complex group bookings, disputes, payment failures, and emotionally charged situations (cancelled honeymoon trips) require human agents. The escalation pipeline must:

- Detect when the chatbot’s confidence drops below a threshold (typically 70 to 80% for booking actions)

- Transfer full conversation context to the human agent, so the traveler does not repeat themselves

- Allow the human agent to take over seamlessly within the same chat interface

- Log the escalation reason for continuous model improvement

According to Hyperleap AI (2025), only 13% of AI chatbot conversations require escalation to human agents, a 60% reduction from traditional chat. The goal is not zero escalations. It is intelligent escalation that preserves the traveler’s experience.
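The escalation rules above can be sketched as a single decision function. This example assumes an upstream component already supplies an intent label and a confidence score between 0 and 1. The threshold value, the intent names, and the `Handoff` structure are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

CONFIDENCE_THRESHOLD = 0.75  # assumption: midpoint of the 70 to 80% range
ALWAYS_ESCALATE = {"dispute", "payment_failure", "group_booking"}  # hypothetical intents

@dataclass
class Handoff:
    """What the human agent receives: the reason plus the full transcript."""
    reason: str
    transcript: list[str]

@dataclass
class EscalationLog:
    """Collects escalation reasons for later analysis."""
    reasons: list[str] = field(default_factory=list)

def maybe_escalate(intent: str, confidence: float,
                   transcript: list[str], log: EscalationLog) -> Optional[Handoff]:
    """Return a Handoff when a human should take over, otherwise None."""
    if intent in ALWAYS_ESCALATE:
        reason = f"intent:{intent}"
    elif confidence < CONFIDENCE_THRESHOLD:
        reason = "low_confidence"
    else:
        return None
    log.reasons.append(reason)
    # Copy the transcript so the agent sees the conversation as it was at handoff.
    return Handoff(reason=reason, transcript=list(transcript))
```

Counting the entries in `log.reasons` per category is one simple way to find which capability gap causes the most handoffs.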

Case study: Adamo Software’s AI-powered travel assistant

Adamo Software built and deployed an AI-powered travel assistant integrated directly into a client’s CMS platform. The client operated a tours and activities business where customers struggled to browse and filter through hundreds of tour options manually. Support teams spent significant time answering repetitive tour-related questions.

The solution had three components:

- Personalized tour recommendation engine. Adamo Software built an AI module that understands user queries and preferences, then recommends tours that best match their needs using real-time CMS data. Instead of browsing static category pages, travelers describe what they want in natural language.

- Natural language assistant integrated into the CMS. Users interact with the assistant to ask about tours, compare packages, and find deals. The assistant accesses live tour data (availability, pricing, itineraries) from the CMS database and presents relevant options within the conversation.

- Conversion optimization through personalization. With personalized suggestions and immediate access to relevant information, users find what they need faster, resulting in higher booking rates and better overall customer satisfaction. Support team workload on common tour-related questions dropped significantly.

The technology stack included Python and OpenAI APIs for the AI layer, integrated with the client’s existing CMS platform. The workflow follows a clear path: user query, preference processing, tour data retrieval, personalized suggestions, and booking completion, all within a single conversational interface.

Key metrics to track

Building the chatbot is half the work. Measuring whether it actually drives bookings is the other half. Track these metrics from day one:

- Containment rate. The percentage of conversations the chatbot resolves without human escalation. Target: 80%+ for routine inquiries (availability checks, booking confirmations, FAQ). Current industry benchmark: 87% resolution without human intervention (Hyperleap AI, 2025).

- Booking conversion rate. The percentage of chatbot conversations that result in a completed booking. This is the metric that matters most. Track it separately from website conversion rate to isolate the chatbot’s impact.

- Average conversation length. Measured in messages and time. Shorter is not always better. A 10-message conversation that ends in a $2,000 booking is more valuable than a 2-message conversation that ends in deflection.

- First response time. The time between the traveler’s first message and the chatbot’s first reply. Target: under 3 seconds. Industry benchmark: 11 seconds average (Hyperleap AI, 2025). Faster first responses correlate strongly with higher completion rates.

- Escalation rate. The percentage of conversations transferred to human agents. Track the reasons for escalation to identify gaps in the chatbot’s capabilities. If 30% of escalations are about group bookings, that is the next feature to build.

- Revenue per conversation. Total booking revenue attributed to chatbot conversations divided by total conversations. This normalizes for traffic volume and lets you compare chatbot ROI against other channels.
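Most of these metrics reduce to simple ratios over a conversation log. The sketch below assumes each conversation has been summarized into a hypothetical `Conversation` record, and it approximates containment as "not escalated", which is a simplification.

```python
from dataclasses import dataclass

@dataclass
class Conversation:
    escalated: bool   # transferred to a human agent
    booked: bool      # ended in a completed booking
    revenue: float    # booking revenue attributed to this conversation
    messages: int     # number of messages exchanged

def chatbot_metrics(conversations: list[Conversation]) -> dict[str, float]:
    """Compute headline chatbot metrics from summarized conversations."""
    total = len(conversations)
    if total == 0:
        return {}
    escalated = sum(c.escalated for c in conversations)
    booked = sum(c.booked for c in conversations)
    return {
        "containment_rate": (total - escalated) / total,
        "escalation_rate": escalated / total,
        "booking_conversion_rate": booked / total,
        "avg_conversation_length": sum(c.messages for c in conversations) / total,
        "revenue_per_conversation": sum(c.revenue for c in conversations) / total,
    }
```

First response time is left out because it needs per-message timestamps rather than per-conversation summaries.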

Common mistakes that kill chatbot conversion (and how to fix them)

Mistake 1: No live inventory access

The problem. The chatbot discusses travel options using its training data or static content, but cannot check actual availability or real-time pricing. The traveler asks “Are there rooms available at the Marriott Courtyard Shibuya for July 12 to 15?” and the chatbot responds with generic information about the hotel, then directs the traveler to a booking page to check availability. The traveler clicks through, discovers the room is sold out or priced differently than expected, and leaves. Trust is broken. The chatbot generated work (an engaged user navigating to the booking page) but not value (a completed booking).

The solution. Connect the chatbot to live inventory through tool-use APIs. Every availability claim the chatbot makes must come from a real-time API call, not from the LLM’s knowledge. The architecture requires a search tool that queries the booking engine or GDS provider, returns structured results (room types, rates, availability status), and feeds those results into the LLM for natural language formatting. The chatbot should never say “this hotel is available” unless the API confirmed it within the last 5 minutes. Implement a cache layer (Redis, TTL 5 minutes for hotels, shorter for flights) to balance API rate limits with data freshness. When cached data is served, append a subtle indicator: “Prices shown are from a few minutes ago. Final price confirmed at checkout.”
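The freshness rule can be sketched with a small TTL cache. An in-memory dictionary stands in for Redis here, the 60-second flight TTL is an assumed value for "shorter for flights", and the class and function names are hypothetical.

```python
import time
from typing import Any, Callable

HOTEL_TTL_SECONDS = 300   # 5 minutes, as described above
FLIGHT_TTL_SECONDS = 60   # assumption: the text only says "shorter for flights"

STALE_NOTICE = "Prices shown are from a few minutes ago. Final price confirmed at checkout."

class InventoryCache:
    """In-memory stand-in for a Redis cache with per-key TTLs."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._entries: dict[str, tuple[float, Any]] = {}

    def get_or_fetch(self, key: str, ttl: float, fetch: Callable[[], Any]) -> tuple[Any, bool]:
        """Return (value, from_cache). Calls fetch() only when the entry is missing or expired."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < ttl:
            return entry[1], True
        value = fetch()
        self._entries[key] = (now, value)
        return value, False

def format_availability(text: str, from_cache: bool) -> str:
    """Append the freshness notice whenever the data came from the cache."""
    return f"{text} {STALE_NOTICE}" if from_cache else text
```

The injectable `clock` parameter exists so expiry can be tested without waiting. In production the default monotonic clock, or Redis key expiry itself, would play that role.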

Mistake 2: Forcing a channel switch at the point of conversion

The problem. The chatbot handles the discovery phase well. It understands what the traveler wants, presents relevant options, answers follow-up questions. Then, at the exact moment the traveler is ready to book: “To complete your reservation, please visit our booking page at [link].” Every channel switch at this stage loses 40 to 60% of engaged users. The traveler landed on the chatbot because they preferred conversational interaction over form-based booking. Sending them to a traditional booking form contradicts the entire value proposition.

The solution. Implement end-to-end booking within the conversation. The chatbot collects traveler details (name, email, dates), confirms the selection, and processes payment without the traveler leaving the chat interface. For payment, two approaches work. The first is an inline payment link: the chatbot generates a secure, pre-filled payment link (via Stripe Checkout or Adyen Drop-in) that opens in a modal or new tab with all booking details pre-populated. The traveler completes payment and returns to the chat with a confirmation. The second is tokenized payment: for returning travelers with saved payment methods, the chatbot confirms the booking with a single “Confirm and pay with your Visa ending in 4242” message. Both approaches keep the traveler inside the conversational flow. The key technical requirement is a booking API that accepts requests from the chatbot service with the same authentication and validation as the web booking engine.

Mistake 3: Losing conversation context across turns

The problem. The traveler says: “I need a hotel in Shibuya, under $150 per night, with breakfast included, for 3 nights starting July 12.” The chatbot presents three options. The traveler asks: “What about the second one, does it have a gym?” The chatbot responds with a generic answer about gyms in Shibuya hotels, ignoring that “the second one” refers to a specific hotel from the previous response. Or worse, the traveler says “Actually, make it 4 nights instead of 3” and the chatbot asks “4 nights at which hotel?” despite only one hotel being under discussion. Context loss is the fastest way to make an AI chatbot feel robotic and unreliable.

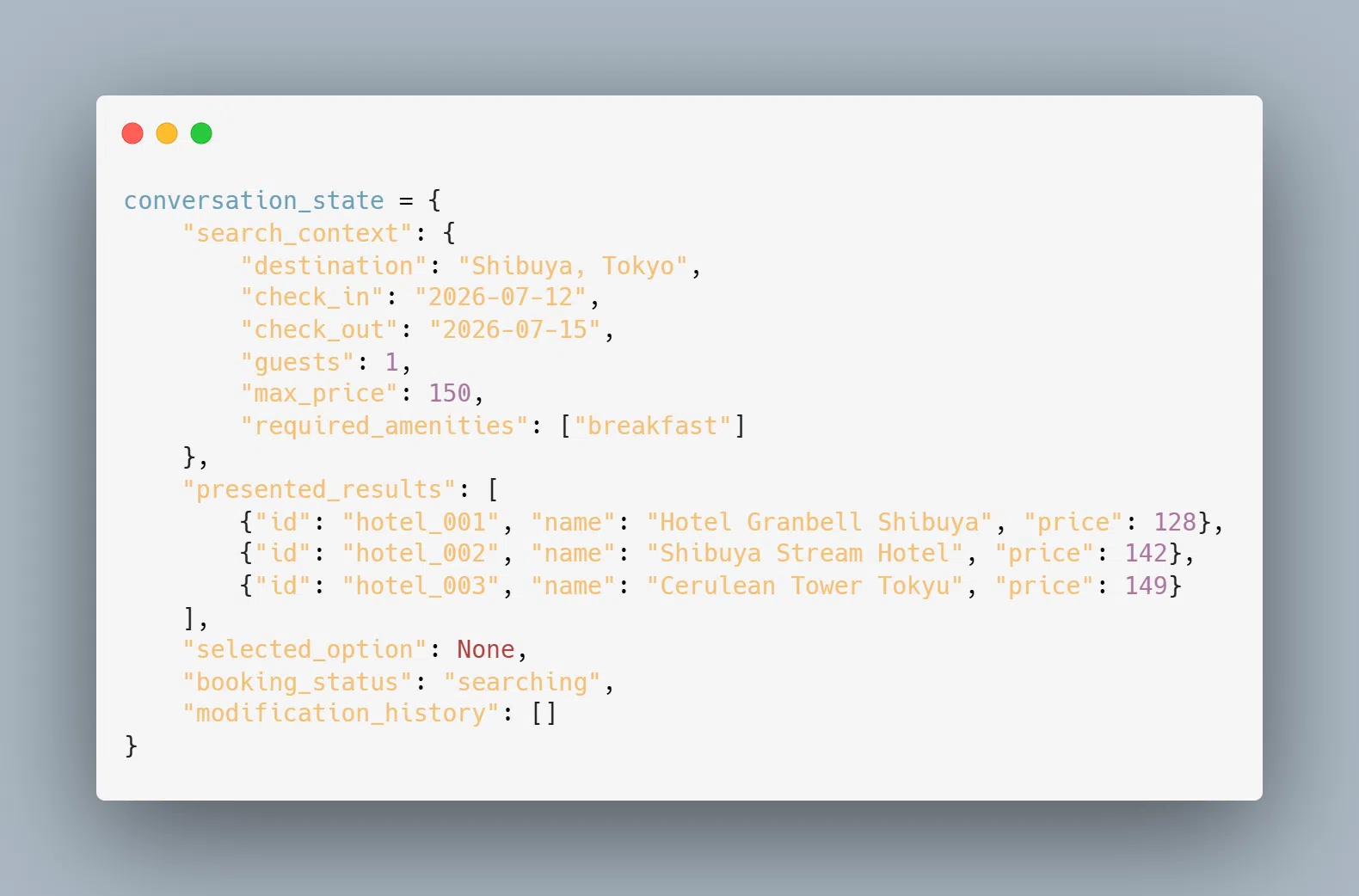

The solution. Implement a structured conversation state object that persists across turns. This is separate from the LLM’s context window (which handles natural language understanding). The state object tracks the active search context (destination, dates, budget, filters), the results already presented to the traveler, and the option currently under discussion.

When the traveler says “the second one,” the system resolves that to `hotel_002` from `presented_results`. When they say “make it 4 nights,” the system updates `check_out` in `search_context` and re-queries availability for the hotel under discussion. The LLM receives both the conversation history and the structured state, so it can generate contextually accurate responses without relying on its own memory of previous turns (which degrades in longer conversations).
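A minimal sketch of such a state object and the two updates just described is shown below. The field names `presented_results`, `search_context`, and `check_out` come from the text. Everything else, including the keyword matching used in place of real language understanding, is an assumption for illustration.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional

@dataclass
class ConversationState:
    search_context: dict = field(default_factory=dict)          # dates, budget, filters
    presented_results: list[str] = field(default_factory=list)  # hotel IDs in display order
    selected_hotel: Optional[str] = None                        # option under discussion

# Simplistic stand-in: in practice the LLM would resolve the reference via a tool call.
ORDINALS = {"first": 0, "second": 1, "third": 2}

def resolve_reference(state: ConversationState, utterance: str) -> Optional[str]:
    """Map phrases like 'the second one' to an ID from presented_results."""
    text = utterance.lower()
    for word, index in ORDINALS.items():
        if word in text and index < len(state.presented_results):
            state.selected_hotel = state.presented_results[index]
            break
    return state.selected_hotel

def set_nights(state: ConversationState, nights: int) -> None:
    """Update check_out from check_in; the caller then re-queries availability."""
    check_in: date = state.search_context["check_in"]
    state.search_context["check_out"] = check_in + timedelta(days=nights)

state = ConversationState(
    search_context={"check_in": date(2025, 7, 12), "check_out": date(2025, 7, 15)},
    presented_results=["hotel_001", "hotel_002", "hotel_003"],
)
```

Because the state lives outside the model, a later turn such as "make it 4 nights" changes one field instead of depending on the model to recall the original dates.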

Mistake 4: English-only implementation in a global market

The problem. 74% of global businesses cite multilingual support as a critical requirement for their AI chatbot implementations (Hyperleap AI, 2025). A travel chatbot that only works in English excludes the majority of the global traveler population. A Japanese traveler searching for hotels in Vietnam, a French family planning a trip to Thailand, a Brazilian couple booking a tour in Indonesia: all of them will engage more deeply and convert at higher rates if the chatbot communicates in their language.

The solution. Modern LLMs (GPT-4, Claude, Gemini) handle multilingual conversations natively. The chatbot does not need separate language models or translation layers. It detects the traveler’s language from their first message and responds in the same language. The technical requirements are:

- Ensure the system prompt instructs the LLM to respond in the traveler’s language.

- Translate tool-use output (hotel names, amenity descriptions, policies) using the LLM itself, or a dedicated translation service for high-accuracy fields like legal terms and cancellation policies.

- Store the detected language in the conversation state so every subsequent response, including error messages and confirmation emails, uses the correct language.

- Test with native speakers for the top 5 to 8 languages your traveler base uses. LLMs are fluent but not flawless; Japanese honorifics, Arabic right-to-left formatting, and Vietnamese diacritics all need QA.

Mistake 5: Using the LLM as the source of truth for factual data

The problem. LLMs hallucinate. It is not a bug. It is a fundamental characteristic of how language models generate text. A travel chatbot powered by an LLM will, at some point, confidently state that a hotel has a rooftop pool when it does not, that a flight departs at 10:30 AM when the actual departure is 10:30 PM, or that a cancellation is free when the policy charges 50%. In a travel context, hallucinated facts lead to booking errors, customer complaints, and refund costs.

The solution. Enforce a strict separation between the LLM’s role and the data layer’s role. The LLM handles natural language understanding (parsing the traveler’s intent), conversation management (deciding what to ask or present next), and response formatting (turning structured data into natural language). The data layer (APIs, databases, cached inventory) provides every factual claim: prices, availability, amenities, policies, schedules. The LLM never generates a price, a time, or a policy statement from its training data. It only reformats what the API returns.

Implement this separation through a validation pipeline: before any response reaches the traveler, a lightweight checker verifies that every numerical claim (price, time, distance, capacity) traces back to an API response in the current session. If the LLM introduces a claim that does not match any API data, the response is flagged and regenerated. This adds 200 to 500ms of latency but eliminates the class of errors that destroy trust. In Adamo Software’s implementations, this validation layer catches 3 to 5% of responses that would otherwise contain hallucinated details.
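One simple way to approximate such a checker is to compare the numbers in a draft response against the numbers present in the session's API data. The sketch below is an assumption about how this could work, not a description of any production validator, and the function names are hypothetical. It is deliberately strict: a time reformatted from 22:30 to 10:30 PM would be flagged too.

```python
import re
from typing import Any

# Matches integers, thousands-separated numbers, and decimals: 3, 1,200, 142.50
NUMBER_PATTERN = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?")

def extract_numbers(text: str) -> set[float]:
    """Pull every numeric token out of a string as a float."""
    return {float(token.replace(",", "")) for token in NUMBER_PATTERN.findall(text)}

def collect_numbers(data: Any) -> set[float]:
    """Recursively gather all numbers found in an API payload."""
    if isinstance(data, dict):
        return set().union(*(collect_numbers(v) for v in data.values()))
    if isinstance(data, (list, tuple)):
        return set().union(*(collect_numbers(v) for v in data))
    if isinstance(data, bool):  # bool is a subclass of int, so exclude it first
        return set()
    if isinstance(data, (int, float)):
        return {float(data)}
    if isinstance(data, str):
        return extract_numbers(data)
    return set()

def unsupported_claims(response: str, api_data: Any) -> set[float]:
    """Numbers in the draft response that do not appear anywhere in the API data."""
    return extract_numbers(response) - collect_numbers(api_data)
```

A non-empty result would trigger regeneration of the response. For example, a draft quoting $129 per night when the API returned 142.5 yields `{129.0}`.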

Conclusion

The AI chatbot for travel booking has passed the point where it is a “nice to have” feature. With 40% of travelers already using AI for trip planning, chatbots handling up to 80% of agency inquiries, and 83% of travelers more likely to book when AI services are present, the conversion impact is measurable and significant. The technical bar, however, is high. A chatbot that merely converses is not enough. The system must access live inventory, process real transactions, maintain conversation context, and escalate gracefully when it reaches its limits. The case study from Adamo Software’s travel assistant deployment demonstrates that even a focused implementation (tour recommendations + natural language search + CMS integration) can meaningfully reduce support workload and increase booking rates. The companies that build this capability now will compound their data advantage with every conversation.

Build an AI Chatbot That Books Travel, Not Just Talks About It

Adamo Software builds AI chatbots for travel platforms with live inventory access, LLM-powered natural language understanding, and end-to-end booking capabilities. From tour recommendation engines to full booking agents integrated with GDS providers, our team delivers chatbots that drive revenue, not just deflect support tickets.

- Explore our services: https://adamosoft.com/ai-development-services/

- Contact us for a free consultation: https://adamosoft.com/contact-us/