AI Travel Assistant Development: A complete guide to building intelligent booking systems

The AI travel assistant market is projected to reach $1.13B by 2030. Learn how to build an AI travel assistant with LLM orchestration, tool-use APIs, and live booking capabilities.

AI travel assistant development is no longer an innovation project. It is a competitive necessity. The AI-powered personal travel assistant market was valued at $756.76 million in 2025 and is projected to reach $1.13 billion by 2030, growing at a CAGR of 8.4% (360iResearch, 2025). According to TakeUp’s 2026 consumer research, 90% of travelers are already aware that AI tools can help plan or book travel, and 78% of those who have used an AI assistant booked travel based primarily on its recommendation (TakeUp, 2026). For travel companies, the question has shifted from “should we build an AI assistant” to “how fast can we build one that actually books trips.”

This guide covers the complete AI travel assistant development process: what to build, how to architect it, which features to prioritize, and what separates assistants that convert from those that merely converse.

Key Takeaways

- The AI-powered personal travel assistant market was valued at $756.76 million in 2025 and is projected to reach $1.13 billion by 2030, growing at 8.4% CAGR (360iResearch, 2025).

- 90% of travelers are aware that AI can help plan or book travel. Among those who have tried it, 63% rely on it for most or every trip and 94% trust AI recommendations as much as traditional sources (TakeUp, 2026).

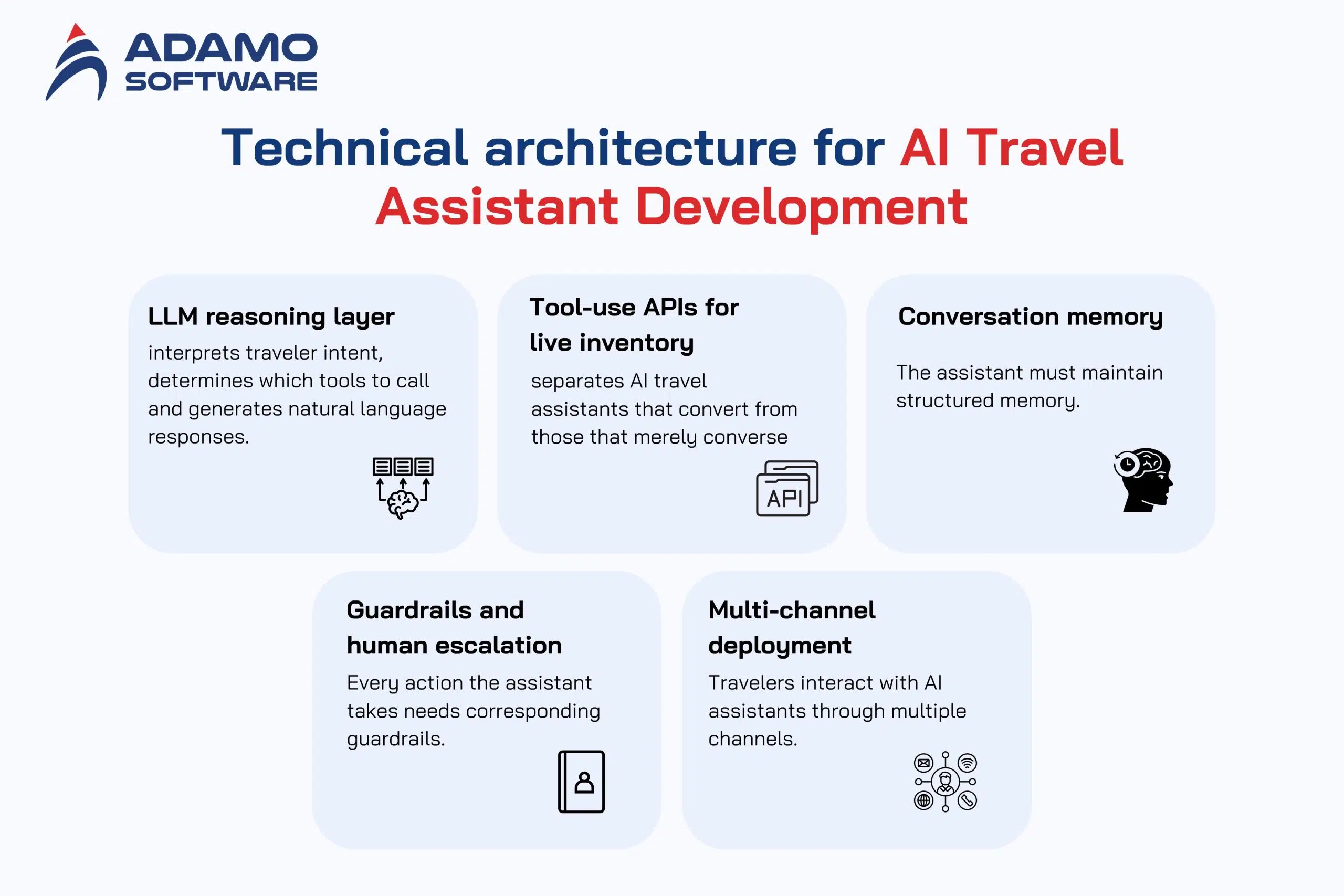

- A production-grade AI travel assistant requires five core components: LLM reasoning layer, tool-use APIs for live inventory, conversation memory, guardrails with human escalation, and multi-channel deployment.

- 78% of travelers who use AI assistants have booked travel based primarily on an AI recommendation (TakeUp, 2026). Properties that do not appear in AI-generated recommendations risk losing visibility and bookings.

- Development timeline for a full-featured AI travel assistant: 4 to 7 months with a team of 6 to 10 engineers, depending on integration complexity with GDS providers and booking systems.

What separates a travel assistant from a travel chatbot

The terms “AI travel assistant” and “travel chatbot” are often used interchangeably, but they describe fundamentally different systems. Understanding the distinction is critical before starting development.

A travel chatbot responds to predefined queries. It answers questions about hotel amenities, flight status, or cancellation policies. It operates within a fixed scope and redirects users to a website or phone line when requests exceed that scope. Most travel chatbots deployed before 2024 fall into this category.

An AI travel assistant acts. It understands natural language queries (“Find me a beachfront hotel in Da Nang for 4 nights under $100 with a pool and breakfast”), searches real inventory across multiple suppliers, compares options, presents recommendations with reasoning, completes bookings with payment processing, and handles post-booking modifications and disruptions. It maintains context across conversations, remembers traveler preferences across sessions, and improves its recommendations with every interaction.

The technology gap between these two is significant. A chatbot requires scripted decision trees and FAQ databases. An AI travel assistant requires LLM orchestration, tool-use APIs connected to live booking systems, conversation memory, confidence-based escalation logic, and multi-channel deployment infrastructure.

The business impact gap is even larger. Assistants that access real inventory and complete bookings within the conversation are reported to convert at significantly higher rates than those that redirect users to another channel. Expedia Group’s AI service agent handles over 143 million conversations annually, with more than 50% of travelers self-serving without calling in (Expedia Group, 2025).

The AI travel assistant market in 2026

Three market forces are driving investment in AI travel assistant development:

Traveler behavior has shifted permanently. 40% of travelers worldwide are already using AI-based tools for trip planning (Statista, 2025). Among those who have tried AI assistants, 63% now rely on them for most or every trip, and 96% say they will use AI again for future travel planning (TakeUp, 2026). The adoption curve is steep rather than gradual: in one survey, AI usage for travel more than doubled, from 11% to 24%, between October 2024 and mid-2025 (Global Rescue, 2025).

Trust has crossed the threshold. 94% of AI users trust AI-generated travel recommendations at least as much as traditional sources like search engines and OTA reviews (TakeUp, 2026). 84% say a trusted AI recommendation would make them more likely to book a specific property. The trust gap that held back adoption for years is closing rapidly.

The competitive window is narrowing. Sabre, PayPal, and MindTrip announced a partnership in February 2026 to build the travel industry’s first end-to-end agentic booking pipeline, covering 420+ airlines and 2 million hotel properties (OAG, 2026). Google is developing agentic booking tools within its AI Mode. Amadeus, Microsoft, and Accenture launched a trip-planning agent inside Microsoft Teams. Companies that do not build AI assistant capabilities now will be competing against these platforms with a multi-year head start.

Technical architecture for AI travel assistant development

A production-grade AI travel assistant consists of five interconnected components. Each one is essential. Removing any single component reduces the system from a booking agent to a conversation widget.

1. LLM reasoning layer

The LLM is the brain of the assistant. It interprets traveler intent, determines which tools to call, sequences multi-step workflows, and generates natural language responses.

For travel use cases, the LLM must handle multi-intent queries (a single message containing flight, hotel, and activity requests), ambiguity resolution (converting “somewhere warm in July under $2000” into searchable parameters), and context maintenance across 5 to 15 conversation turns.
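As a minimal sketch of ambiguity resolution, the snippet below assumes the model has been prompted to return its interpretation of a request as JSON, and validates that JSON before any supplier search runs. The `TripSearchParams` schema, its field names, and the example dates are illustrative assumptions, not part of any specific model API.

```python
import json
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class TripSearchParams:
    """Searchable parameters extracted from a free-text request (hypothetical schema)."""
    climate: Optional[str]
    start_date: date
    end_date: date
    max_budget_usd: float


def parse_search_params(model_json: str) -> TripSearchParams:
    """Validate the JSON returned by the model before calling any supplier API."""
    raw = json.loads(model_json)
    start = date.fromisoformat(raw["start_date"])
    end = date.fromisoformat(raw["end_date"])
    if end <= start:
        raise ValueError("end_date must be after start_date")
    budget = float(raw["max_budget_usd"])
    if budget <= 0:
        raise ValueError("max_budget_usd must be positive")
    return TripSearchParams(raw.get("climate"), start, end, budget)


# What a model might return for "somewhere warm in July under $2000".
# The year and exact dates are illustrative; a real assistant would ask or infer them.
example_output = (
    '{"climate": "warm", "start_date": "2026-07-01", '
    '"end_date": "2026-07-31", "max_budget_usd": 2000}'
)
params = parse_search_params(example_output)
```

Validating at this boundary means a malformed or implausible interpretation fails early, before it reaches a rate-limited inventory API.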

The recommended approach is to use a foundation model (GPT-4, Claude, or Gemini) via API with a well-structured system prompt and tool definitions. Fine-tuning is rarely necessary for travel assistants. Prompt engineering with clear tool schemas is more cost-effective and maintainable. Adamo Software’s experience with AI-powered travel booking platform projects confirms that prompt engineering with structured tool definitions outperforms fine-tuned models for most travel booking scenarios.

2. Tool-use APIs for live inventory

This component separates AI travel assistants that convert from those that merely converse. The LLM must call real systems through structured tool definitions:

- Search flights via GDS integration with Amadeus, Sabre, or airline NDC connections

- Search hotels via supplier APIs, OTA aggregators, or the property’s own booking engine

- Check real-time availability with current pricing

- Create bookings with traveler details and payment processing

- Modify reservations (date changes, room upgrades, cancellations)

- Send confirmations via email, SMS, or push notification

Each tool is defined with a typed schema that the LLM interprets and invokes. The integration with GDS providers is the most technically demanding part of AI travel assistant development. GDS APIs enforce rate limits, return data in inconsistent formats across providers, and require caching strategies to balance data freshness with quota constraints.
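The sketch below illustrates one way a typed tool schema and its dispatcher could look. The tool name, its fields, and the stub inventory are hypothetical; the schema follows the JSON Schema style that tool-use APIs generally accept, and a real handler would call a supplier or GDS API instead of a hard-coded list.

```python
from typing import Any, Callable, Dict, List

# Tool definitions in a JSON Schema style. Names and fields are hypothetical.
TOOLS: Dict[str, Dict[str, Any]] = {
    "search_hotels": {
        "description": "Search live hotel inventory.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string"},
                "nights": {"type": "integer"},
                "max_price_usd": {"type": "number"},
            },
            "required": ["city", "nights"],
        },
    },
}

_PY_TYPES = {"string": str, "integer": int, "number": (int, float)}


def validate_args(tool_name: str, args: Dict[str, Any]) -> None:
    """Reject calls with missing, unknown, or wrongly typed arguments."""
    schema = TOOLS[tool_name]["parameters"]
    missing = [name for name in schema["required"] if name not in args]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    for name, value in args.items():
        spec = schema["properties"].get(name)
        if spec is None:
            raise ValueError(f"unknown argument: {name}")
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, _PY_TYPES[spec["type"]]):
            raise TypeError(f"{name} must be of type {spec['type']}")


def search_hotels(city: str, nights: int, max_price_usd: float = float("inf")) -> List[Dict[str, Any]]:
    """Stub handler; `nights` is accepted but unused in this canned example."""
    inventory = [
        {"name": "Example Beach Hotel", "city": "Da Nang", "price_usd": 85},
        {"name": "Example Bay Resort", "city": "Da Nang", "price_usd": 120},
    ]
    return [h for h in inventory if h["city"] == city and h["price_usd"] <= max_price_usd]


HANDLERS: Dict[str, Callable[..., Any]] = {"search_hotels": search_hotels}


def dispatch(tool_name: str, args: Dict[str, Any]) -> Any:
    """Validate a tool call requested by the model, then run its handler."""
    if tool_name not in TOOLS:
        raise KeyError(f"unknown tool: {tool_name}")
    validate_args(tool_name, args)
    return HANDLERS[tool_name](**args)


results = dispatch("search_hotels", {"city": "Da Nang", "nights": 4, "max_price_usd": 100})
```

Keeping validation between the model and the handler means a malformed tool call never reaches a booking system.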

3. Conversation memory

Travel booking conversations typically span 5 to 15 messages. The assistant must maintain structured memory of stated preferences (destination, dates, budget), search results already presented, decisions made, booking state, and traveler profile data (loyalty tier, past bookings, saved payment methods).

Short-term memory (within a session) is handled by the LLM’s context window, backed by structured session state in a fast store such as Redis. Long-term memory (across sessions) requires a persistent store, such as PostgreSQL for traveler profiles. Long-term memory enables interactions like: “Book the same hotel I stayed at in Bangkok last March, but for 5 nights this time.”
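A structured session record might look like the sketch below. The field names and booking states are assumptions chosen for illustration; the JSON round trip stands in for writing to and reading from a session store, which is not shown.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class SessionMemory:
    """Structured state for one booking conversation (hypothetical fields)."""
    destination: Optional[str] = None
    check_in: Optional[str] = None  # ISO date string, e.g. "2026-03-14"
    nights: Optional[int] = None
    budget_usd: Optional[float] = None
    shown_result_ids: List[str] = field(default_factory=list)
    booking_state: str = "searching"  # e.g. searching, selected, paid, confirmed

    def to_json(self) -> str:
        """Serialize for a session store."""
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, payload: str) -> "SessionMemory":
        """Restore a session from its serialized form."""
        return cls(**json.loads(payload))

    def as_prompt_context(self) -> str:
        """Compact summary of known facts to prepend to the next model call."""
        known = {k: v for k, v in asdict(self).items() if v not in (None, [])}
        return "Known so far: " + json.dumps(known, sort_keys=True)


memory = SessionMemory(destination="Bangkok", nights=5)
restored = SessionMemory.from_json(memory.to_json())
```

Passing a compact summary instead of the full transcript keeps the prompt short as the conversation grows.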

4. Guardrails and human escalation

Autonomous booking actions carry real financial risk. Every action the assistant takes needs corresponding guardrails:

- Confidence thresholds: if the assistant’s confidence score for an action drops below a set threshold (typically 75 to 80%), it pauses and escalates to a human agent with full context

- Business rule enforcement: blackout dates, minimum stay requirements, rate parity rules, cancellation deadlines

- Transaction limits: maximum booking values and maximum autonomous modifications per trip

- Audit logging: every autonomous action logged with the reasoning chain, data accessed, and decision made

According to Hyperleap AI (2025), only 13% of AI chatbot conversations require human escalation, a 60% reduction from traditional chat support. The goal is intelligent escalation, not zero escalation.
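The guardrails above can be sketched as a single decision function. This is an illustration under stated assumptions: the policy values, the `ProposedAction` fields, and the in-memory audit log are hypothetical, and how a confidence score is derived is left to the implementation.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GuardrailPolicy:
    min_confidence: float = 0.80     # within the 75 to 80% range discussed above
    max_booking_usd: float = 2000.0  # hypothetical transaction limit


@dataclass(frozen=True)
class ProposedAction:
    kind: str          # e.g. "create_booking"
    amount_usd: float
    confidence: float  # assumed to be supplied by the orchestration layer
    reasoning: str


# Stand-in for a durable audit store.
AUDIT_LOG: List[str] = []


def decide(action: ProposedAction, policy: GuardrailPolicy = GuardrailPolicy()) -> str:
    """Return "execute" or "escalate", logging the decision either way."""
    if action.confidence < policy.min_confidence:
        decision, cause = "escalate", "low_confidence"
    elif action.amount_usd > policy.max_booking_usd:
        decision, cause = "escalate", "over_transaction_limit"
    else:
        decision, cause = "execute", "within_policy"
    AUDIT_LOG.append(json.dumps({
        "kind": action.kind,
        "amount_usd": action.amount_usd,
        "confidence": action.confidence,
        "reasoning": action.reasoning,
        "decision": decision,
        "cause": cause,
    }))
    return decision
```

For example, `decide(ProposedAction("create_booking", 400.0, 0.92, "matches stated budget"))` returns `"execute"`, while the same action with a confidence of 0.6 returns `"escalate"`.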

5. Multi-channel deployment

Travelers interact with AI assistants through multiple channels: website chat widgets, mobile apps, messaging platforms (WhatsApp, Facebook Messenger), voice interfaces, and increasingly through third-party AI assistants (ChatGPT, Claude, Gemini) via the Model Context Protocol (MCP).

The assistant’s core logic (LLM orchestration, tool-use, memory) should be channel-agnostic. A single backend serves all channels. The presentation layer adapts to each channel’s constraints (character limits for SMS, rich cards for web, voice synthesis for phone).
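A minimal sketch of that separation: the core logic produces channel-neutral results, and small renderers adapt them per channel. The `HotelOption` type, the renderer names, and the web card shape are assumptions for illustration; 160 characters is the single-segment limit for a GSM-7 SMS.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class HotelOption:
    """Channel-neutral result produced by the core logic (hypothetical shape)."""
    name: str
    price_usd: int
    reason: str


SMS_LIMIT = 160  # single-segment GSM-7 SMS length


def render_sms(options: List[HotelOption]) -> str:
    """Terse numbered list, truncated to fit one SMS segment."""
    lines = [f"{i}. {o.name} ${o.price_usd}" for i, o in enumerate(options, 1)]
    text = "\n".join(lines)
    return text if len(text) <= SMS_LIMIT else text[: SMS_LIMIT - 3] + "..."


def render_web(options: List[HotelOption]) -> List[Dict[str, str]]:
    """Rich cards that a web chat widget could display."""
    return [
        {"title": o.name, "subtitle": f"${o.price_usd} per night", "body": o.reason}
        for o in options
    ]


RENDERERS: Dict[str, Callable[[List[HotelOption]], Any]] = {
    "sms": render_sms,
    "web": render_web,
}


def render(channel: str, options: List[HotelOption]) -> Any:
    """Pick the presentation adapter for a channel; core logic stays unchanged."""
    return RENDERERS[channel](options)
```

Adding a new channel then means adding one renderer, with no change to orchestration, tools, or memory.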

MCP (Model Context Protocol) support is becoming critical. When a traveler asks ChatGPT or Claude to “find me a hotel in Shibuya,” that external assistant needs a protocol to discover and query hotel inventory. Travel platforms that expose their inventory via MCP endpoints allow external AI assistants to search and book directly. This represents a new distribution channel that bypasses traditional OTA intermediaries. For more on this topic, see our guide on agentic AI in travel.

Feature prioritization framework

Not every feature delivers equal value at launch. Based on Adamo Software’s experience with travel software development projects, here is the recommended build sequence:

Phase 1: Search and recommend (weeks 1 to 8). The assistant can understand natural language queries, search real inventory across suppliers, present ranked results with reasoning, and answer follow-up questions about specific properties. This phase delivers immediate value by reducing the time travelers spend browsing and comparing options.

Phase 2: End-to-end booking (weeks 6 to 14). The assistant collects traveler details, processes payment within the conversation, creates confirmed bookings, and sends confirmation notifications. This is the revenue-generating phase. Every channel switch (redirecting to a website for booking) loses 40 to 60% of engaged users. Completing the booking inside the conversation is the single highest-impact feature.

Phase 3: Post-booking management (weeks 10 to 18). The assistant handles modification requests (date changes, room upgrades), cancellation processing, disruption management (flight cancellations, rebooking), and proactive notifications (gate changes, check-in reminders). This phase drives retention and customer satisfaction.

Phase 4: Personalization and autonomy (weeks 14 to 24). The assistant uses historical data to personalize recommendations, proactively suggests trips based on travel patterns, manages loyalty programs, and operates at graduated autonomy levels (suggest, act-with-notification, full autonomy). This phase compounds value with every interaction.

Total timeline for a production-ready AI travel assistant: 4 to 7 months with a dedicated team of 6 to 10 engineers, depending on the number of supplier integrations and the depth of custom travel solutions required.

Technology stack recommendations

Based on Adamo Software’s AI development services delivery experience, here are the technology choices validated in production:

- LLM provider: OpenAI (GPT-4o) or Anthropic (Claude) via API. Both support tool-use natively. Selection depends on latency requirements and pricing at scale.

- Orchestration: LangChain or LlamaIndex for tool routing and multi-step workflows. For simpler implementations, a custom orchestrator with direct API calls offers more control and lower latency.

- Backend: Python (FastAPI) for the AI orchestration layer. Node.js (Fastify) for the booking and search services that require high concurrency.

- Database: PostgreSQL for transactional data (bookings, user profiles). Redis for session state and search result caching.

- Search index: Elasticsearch for full-text search across normalized inventory.

- Event streaming: Apache Kafka for real-time data flow between the AI layer, booking services, and notification systems.

- Infrastructure: Docker and Kubernetes on AWS EKS or GCP GKE. Auto-scaling policies tied to conversation volume.

- Monitoring: OpenTelemetry for distributed tracing across the LLM layer, tool-use calls, and booking services. Essential for debugging multi-step AI workflows.
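To illustrate the search result caching role listed above, here is a small in-memory time-to-live cache. It is a stand-in sketch only: in production a shared store such as Redis would typically hold these entries, and the `TTLCache` class, the key format, and the 60-second TTL are assumptions.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Serve a cached value until it is older than ttl_seconds, then refetch."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_fetch(self, key: Hashable, fetch: Callable[[], Any]) -> Any:
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # fresh enough: no supplier call, no quota spent
        value = fetch()
        self._entries[key] = (now, value)
        return value


# Usage: identical searches within 60 seconds reuse one supplier response.
cache = TTLCache(ttl_seconds=60)
quote = cache.get_or_fetch(
    ("hotels", "Da Nang", 4),
    lambda: [{"name": "Example Beach Hotel", "price_usd": 85}],  # stub supplier call
)
```

A shorter TTL gives fresher prices at the cost of more supplier calls, which is the freshness versus quota trade-off that inventory integrations face.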

Key metrics to track

- Booking conversion rate: Percentage of conversations that result in a completed booking. The most important metric.

- Containment rate: Percentage resolved without human escalation. Target: 80%+ for routine inquiries.

- First response time: Target under 3 seconds. Industry benchmark: 11 seconds average (Hyperleap AI, 2025).

- Revenue per conversation: Total booking revenue divided by total conversations. Normalizes for traffic volume.

- Recommendation accuracy: Percentage of recommendations that match stated preferences. Tracked via user acceptance rate.

- Hallucination rate: Percentage of responses containing factual errors not supported by API data. Target: under 1% after validation pipeline implementation.
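Several of these metrics reduce to simple ratios over conversation records. The sketch below assumes a hypothetical `ConversationRecord` with three fields; real tracking would draw on analytics events rather than an in-memory list.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ConversationRecord:
    """One finished conversation (hypothetical fields)."""
    booked: bool
    escalated: bool
    revenue_usd: float


def summarize(records: List[ConversationRecord]) -> Dict[str, float]:
    """Compute conversion, containment, and revenue per conversation."""
    total = len(records)
    if total == 0:
        raise ValueError("no conversations to summarize")
    return {
        "booking_conversion_rate": sum(r.booked for r in records) / total,
        "containment_rate": sum(not r.escalated for r in records) / total,
        "revenue_per_conversation": sum(r.revenue_usd for r in records) / total,
    }


sample = [
    ConversationRecord(booked=True, escalated=False, revenue_usd=400.0),
    ConversationRecord(booked=False, escalated=False, revenue_usd=0.0),
    ConversationRecord(booked=False, escalated=True, revenue_usd=0.0),
    ConversationRecord(booked=False, escalated=False, revenue_usd=0.0),
]
summary = summarize(sample)
```

With this sample, one of four conversations booked and one escalated, so conversion is 25%, containment is 75%, and revenue per conversation is $100.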

Conclusion

AI travel assistant development has moved from experimental to essential. The market data points in one direction: $756.76 million in 2025 growing to a projected $1.13 billion by 2030, 90% traveler awareness, 78% of AI assistant users booking primarily on an AI recommendation, and 94% trusting AI recommendations at least as much as traditional sources. The technical architecture is well-defined: LLM orchestration, tool-use APIs, conversation memory, guardrails, and multi-channel deployment. The competitive landscape is accelerating, with Sabre, Google, Amadeus, and Microsoft all building agentic booking and trip-planning tools. For travel companies building custom platforms, the development window is now. The companies that ship a production-ready AI travel assistant in the next 6 months will compound their data advantage with every conversation, making it progressively harder for late entrants to catch up.

Build Your AI Travel Assistant With Adamo

Adamo Software develops custom AI travel assistants with live inventory access, LLM-powered natural language booking, and multi-supplier integration. From search and recommendation engines to fully autonomous booking agents connected to GDS providers and payment systems, our team delivers assistants that drive revenue across web, mobile, and AI assistant channels.

- Explore our services: https://adamosoft.com/travel-and-hospitality-software-development/

- Contact us for a free consultation: https://adamosoft.com/contact-us/